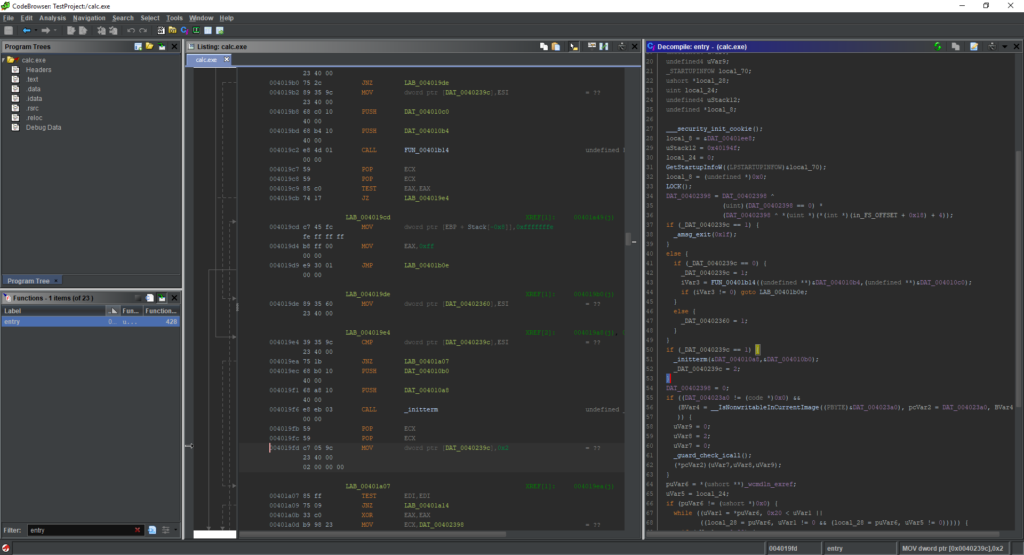

GHIDRACULA

Not so long ago, the NSA released its reverse engineering tool, GHIDRA. At first glance it seems like an impressive tool, but sadly it does not really support dark themes, which makes it too bright to work with for long hours.

After making some minor patches, I’m proud to release the first quick-and-dirty version of GHIDRACULA. It is not perfect yet, but it is a good starting point.

To generate this “theme”, I based most of the editor’s colors on GHIDRA Darknight and modified the color scheme to fit the Darcula Look and Feel.

Some important notes:

- Other themes are currently not supported. To revert to the old color schemes, you will have to extract the Docking.jar from the original GHIDRA installation and put it back in place.

- Not everything has been converted to the new color scheme. If something is bothering you, please let me know and I’ll try to make time to fix it.

- Please share your notes!

- CURRENTLY SUPPORTING ONLY WINDOWS! This is due to a known issue in Darcula when using Linux with Java 11.
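
Since reverting requires the original Docking.jar, it may be worth keeping a copy before installing. The following is only a minimal sketch and not part of GHIDRACULA: the function name `backup_docking_jar` and both directory arguments are hypothetical. Because the exact location of Docking.jar inside the installation is not stated here, the sketch simply searches the whole installation directory for it.

```python
import shutil
from pathlib import Path


def backup_docking_jar(ghidra_dir, backup_dir):
    """Copy every Docking.jar found under ghidra_dir into backup_dir.

    Hypothetical helper: the directory layout is not assumed, so the
    installation is searched recursively. Returns the backup paths written.
    """
    ghidra_dir = Path(ghidra_dir)
    backup_dir = Path(backup_dir)
    backups = []
    # sorted() collects all matches first, so copies written during the
    # loop are never picked up by the search itself.
    for jar in sorted(ghidra_dir.rglob("Docking.jar")):
        # Mirror the relative path so several matches cannot collide.
        target = backup_dir / jar.relative_to(ghidra_dir)
        target.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(jar, target)
        backups.append(target)
    return backups
```

Calling `backup_docking_jar(install_dir, some_backup_dir)` before running the installer leaves a copy that can later be moved back over the patched jar, with both paths chosen by you.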

How to install?

- Download the GHIDRACULA tarball and extract it.

- Make sure that GHIDRA is closed.

- Execute the install.py Python script.

- When prompted, choose the GHIDRA installation directory.

- If the script cannot find the _code_browser.tcd file, you will be asked to provide its location manually.

- Relaunch GHIDRA.
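
The "search first, ask if not found" step for _code_browser.tcd can be pictured roughly as follows. This is an illustrative sketch, not the actual code of install.py: the names `find_tcd` and `locate_tcd` are hypothetical, and which directories to search (for example the installation directory or your home directory) is an assumption left to the caller.

```python
from pathlib import Path


def find_tcd(search_roots, name="_code_browser.tcd"):
    """Return the first file called `name` under any search root, or None."""
    for root in search_roots:
        root = Path(root)
        if not root.is_dir():
            continue  # skip roots that do not exist
        for candidate in sorted(root.rglob(name)):
            if candidate.is_file():
                return candidate
    return None


def locate_tcd(search_roots, ask=input):
    """Search automatically, then fall back to asking for the path."""
    found = find_tcd(search_roots)
    if found is not None:
        return found
    # Nothing found: request the location manually, as the installer does.
    answer = ask("Path to _code_browser.tcd: ").strip()
    manual = Path(answer).expanduser()
    if not manual.is_file():
        raise FileNotFoundError(f"No such file: {manual}")
    return manual
```

Passing the prompt function as the `ask` parameter keeps the fallback easy to exercise without a terminal.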

Source Code

To generate the Docking.jar, you can simply apply this patch file to the source. You can download the Darcula theme from the Darcula GitHub and compile it.